Is GPT-4.5 any better for writing?

My side-by-side comparison of GPT-4o vs GPT-4.5 for writers and content creators.

OpenAI just dropped GPT-4.5 a few hours ago, and the AI world is buzzing with excitement, or at least that's what OpenAI is hoping for. This release wasn't exactly a surprise. With Claude 3.7 Sonnet, Grok 3, and OpenAI's own Deep Research feature all hitting the market recently, the pressure was on for OpenAI to respond with something new.

According to OpenAI's announcement, GPT-4.5 is the company's "largest and best model for chat yet." They're marketing it as a breakthrough in natural conversation, claiming it "improves its ability to recognize patterns, draw connections, and generate creative insights." The buzzwords are all there: greater "EQ," reduced hallucinations, and an improved ability to follow user intent.

But here's what matters for us content creators: they're specifically positioning it as better for writing tasks. OpenAI claims interactions with GPT-4.5 "feel more natural" and that its broader knowledge and improved ability to follow intent make it especially "useful for tasks like writing."

That's a big claim—and one I immediately wanted to put to the test. As someone who spends hours working with these models for content creation, I care less about benchmark scores and more about real-world performance.

Does GPT-4.5 actually deliver better writing output than GPT-4o?

Let's find out.

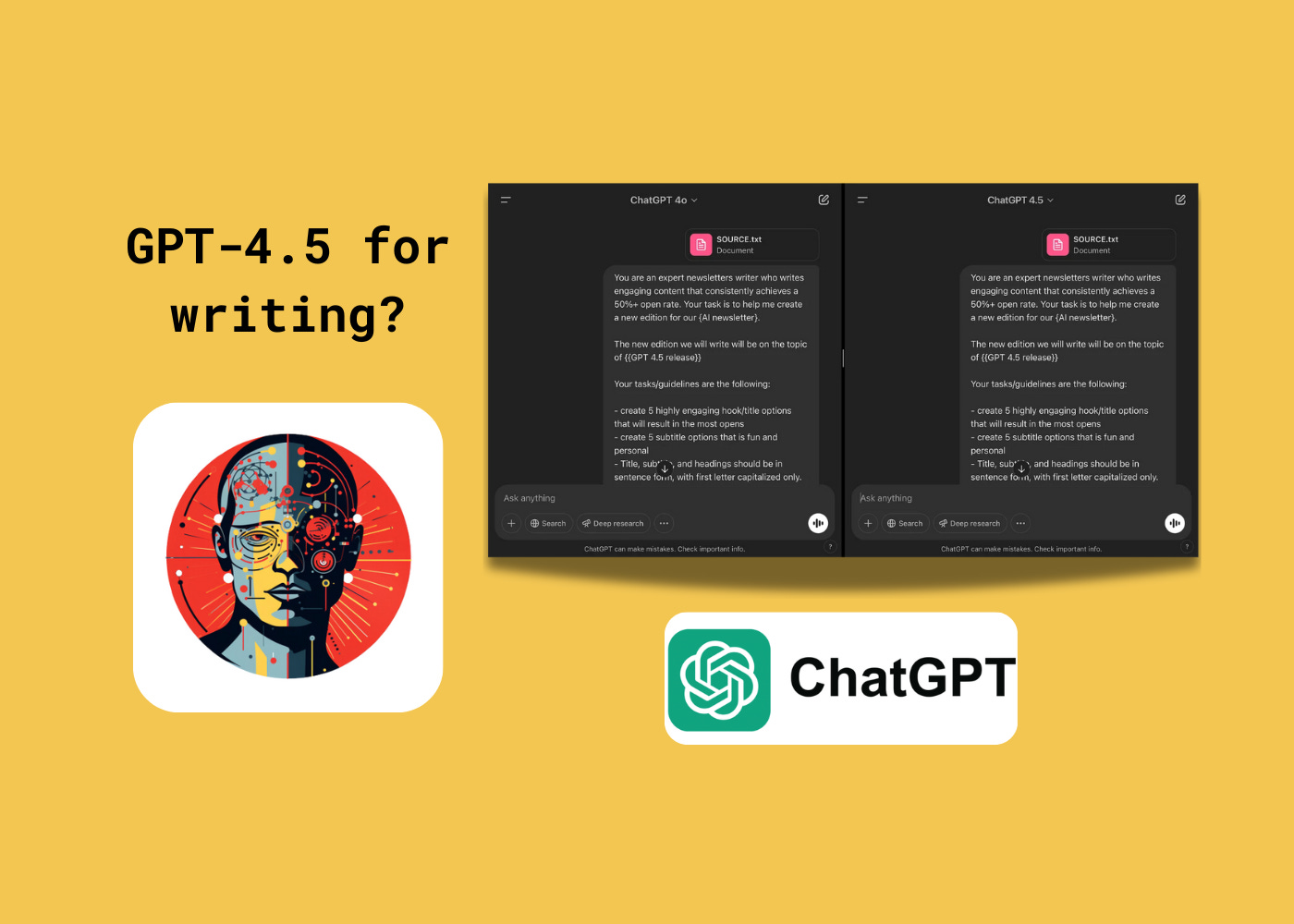

Behind the test: how I compared the models

To test these models fairly, I set up a side-by-side comparison using the exact same prompt for both GPT-4.5 and GPT-4o. I chose newsletter writing as my test case, since it's directly relevant to what many of us are doing with these tools.

The test was simple: I provided both models with identical instructions to write a newsletter about the GPT-4.5 release, using OpenAI's announcement text as the source material. This allowed me to directly compare how each model interpreted the same task and structured its response.
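If you want to rerun this kind of comparison yourself, the setup can be sketched in a few lines of Python. This is only an illustration, not the exact harness I used: the prompt wording, the `build_prompt` and `compare_models` helpers, and the stand-in model functions are all assumptions made for the example. In practice you would replace each stand-in with a function that sends the prompt to the real model and returns its reply.

```python
from typing import Callable, Dict


def build_prompt(source_text: str) -> str:
    """Build one prompt string that every model receives unchanged.

    The instruction wording here is hypothetical, not the original prompt.
    """
    return (
        "Write a newsletter about the GPT-4.5 release, "
        "using the announcement text below as source material.\n\n"
        f"{source_text}"
    )


def compare_models(
    prompt: str, models: Dict[str, Callable[[str], str]]
) -> Dict[str, str]:
    """Send the identical prompt to each model and collect outputs by name."""
    return {name: generate(prompt) for name, generate in models.items()}


if __name__ == "__main__":
    # Stand-in generators so the sketch runs offline; swap in real model calls.
    def fake_gpt_4o(prompt: str) -> str:
        return f"(GPT-4o draft based on a {len(prompt)}-character prompt)"

    def fake_gpt_45(prompt: str) -> str:
        return f"(GPT-4.5 draft based on a {len(prompt)}-character prompt)"

    prompt = build_prompt("<paste the announcement text here>")
    drafts = compare_models(
        prompt, {"GPT-4o": fake_gpt_4o, "GPT-4.5": fake_gpt_45}
    )

    # Print the drafts one after the other for a side-by-side read.
    for name, draft in drafts.items():
        print(f"=== {name} ===\n{draft}\n")
```

The point of the structure is that the prompt is built once and passed to every model untouched, so any difference in the drafts comes from the model and not from the instructions.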