ChatGPT finally has project-based memory.

After watching Claude Projects dominate the "actually useful for work" category, OpenAI rolled out their version. And if you've been using Claude Projects, this will feel somewhat familiar.

Projects in ChatGPT are dedicated workspaces that maintain context across all conversations within that project.

The key differences:

Regular ChatGPT: Start fresh every conversation

Custom GPTs: Specialized tool with static instructions

Projects: Workspace with persistent files and evolving context

Each project now remembers key information from previous chats within that project.

It's essentially the same thing Claude launched just a few weeks ago. Same concept, same benefits, slightly different interface.

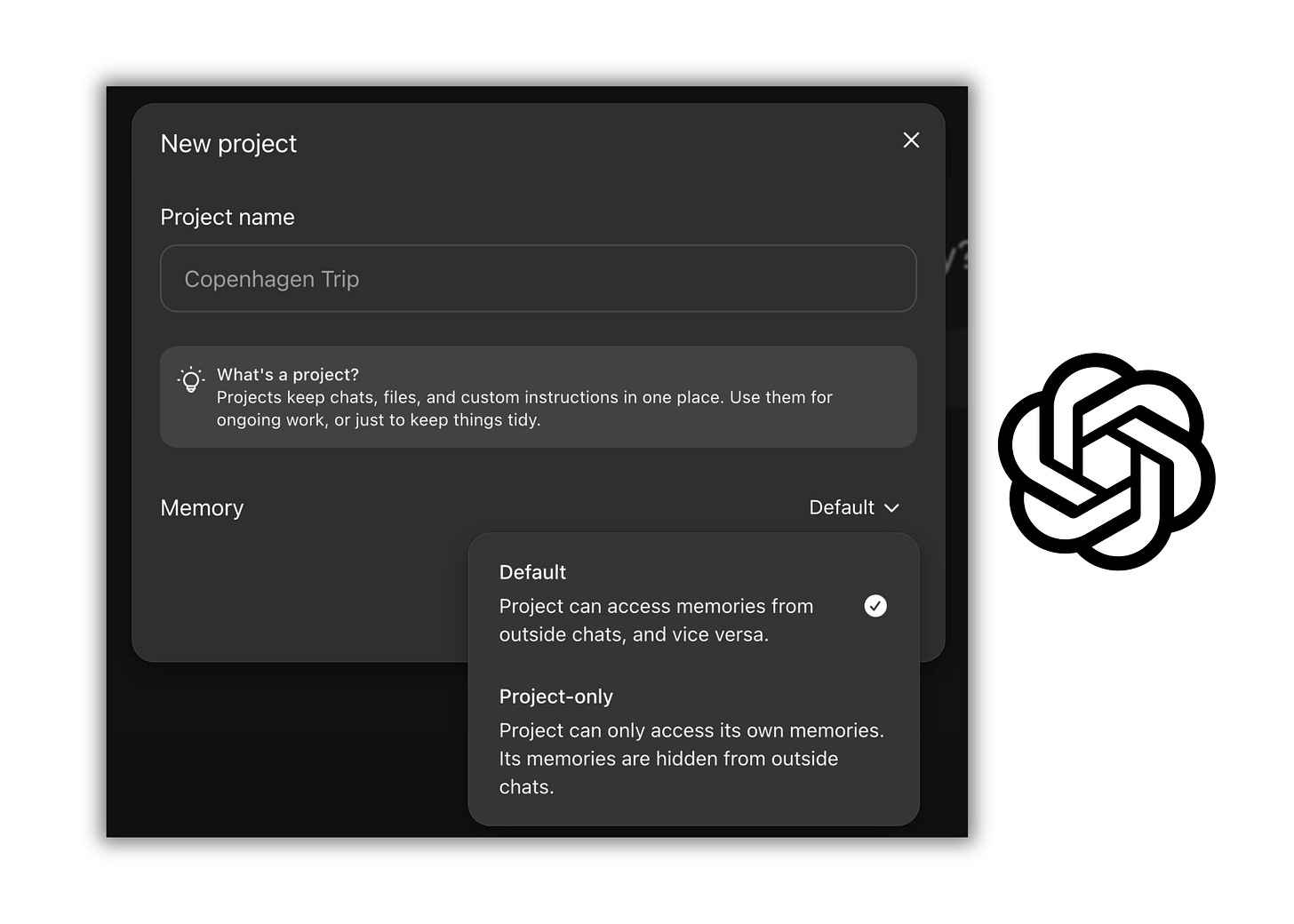

But there is one critical difference between ChatGPT and Claude

Here's what actually matters:

Claude Projects: You can explicitly ask it to reference past conversations. "Based on our previous discussion about X" or "Remember when we worked on Y?" — Claude will actually go find and reference those specific chats.

ChatGPT Projects: It creates automatic memory logs — little snippets it decides are important. But it doesn't actually review your full conversation history. It's more like sticky notes than a filing cabinet.

For this reason, I still think Claude is way superior for complex, ongoing work. ChatGPT's approach is more like "here's what I think was important" versus Claude's "let me check what we actually discussed."

But ChatGPT Projects is still a massive improvement over having no persistent context at all.

Why every LLM is moving this direction

This isn't coincidence.

Professional AI use requires persistent context.

Nobody wants to re-explain their work 50 times a day.

Nobody wants to upload the same files every conversation.

Nobody wants to lose great outputs in a sea of chat history.

The market has spoken: Random chats don't work for real work.

Every major LLM will have this feature. It's not optional anymore.

Here’s how you can set this up strategically

Here are some ways to structure your projects for maximum leverage:

Client Projects — One for each client:

Project name: "[Client Name] Content"

Purpose: All content for that specific client

What goes in: Their voice guidelines, past content, brand rules, target audience

Process Projects — One for each repeatable task:

Project name: "Newsletter Writing" or "LinkedIn Posts" or "Email Sequences"

Purpose: Optimized for that specific format

What goes in: Format templates, past examples, what performs well

Catch-All Project — Your general workspace:

Project name: "Content Workshop" or similar

Purpose: Testing, experiments, one-offs

What goes in: General resources, reference materials
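If you like to plan things out before clicking around in the app, the three project types above can be sketched as a small Python structure. This is only a planning aid under my own assumptions: `ProjectPlan`, `build_plan`, and the example client name "Acme" are hypothetical names made up for illustration, and nothing here calls ChatGPT or Claude.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectPlan:
    """One planned project workspace (hypothetical structure, not a ChatGPT API)."""
    name: str
    kind: str  # "client", "process", or "catch-all"
    purpose: str
    contents: list[str] = field(default_factory=list)


def client_project(client_name: str) -> ProjectPlan:
    # One project per client, named "[Client Name] Content".
    return ProjectPlan(
        name=f"{client_name} Content",
        kind="client",
        purpose="All content for that specific client",
        contents=["Voice guidelines", "Past content", "Brand rules", "Target audience"],
    )


def process_project(task_name: str) -> ProjectPlan:
    # One project per repeatable task, e.g. "Newsletter Writing".
    return ProjectPlan(
        name=task_name,
        kind="process",
        purpose="Optimized for that specific format",
        contents=["Format templates", "Past examples", "What performs well"],
    )


def catch_all_project(name: str = "Content Workshop") -> ProjectPlan:
    # A single general workspace for testing, experiments, and one-offs.
    return ProjectPlan(
        name=name,
        kind="catch-all",
        purpose="Testing, experiments, one-offs",
        contents=["General resources", "Reference materials"],
    )


def build_plan(clients: list[str], tasks: list[str]) -> list[ProjectPlan]:
    """Client projects first, then process projects, then the catch-all."""
    plan = [client_project(c) for c in clients]
    plan += [process_project(t) for t in tasks]
    plan.append(catch_all_project())
    return plan


if __name__ == "__main__":
    # "Acme" is a made-up client used only for this example.
    for project in build_plan(["Acme"], ["Newsletter Writing", "LinkedIn Posts"]):
        print(f"[{project.kind}] {project.name}: {', '.join(project.contents)}")
```

Writing the list down this way makes it easy to spot a client or a repeatable task that has no project yet.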

This is great, but you should also use my 4-layer system for projects.

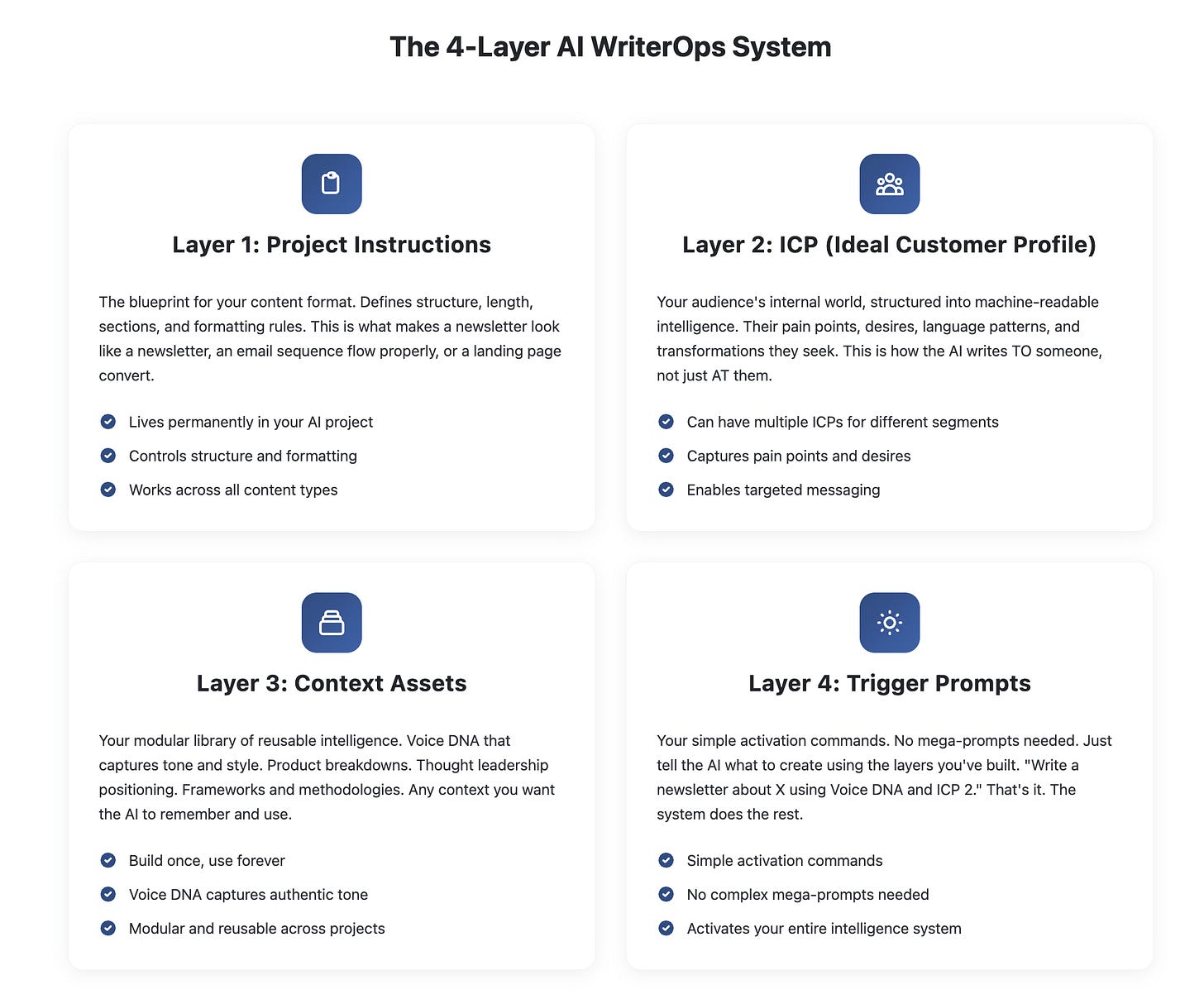

My 4-layer system for projects

If you've been following my work on context engineering, you know this framework.

Here's how I would apply it to ChatGPT Projects:

Layer 1: Project Instructions

This is your master blueprint that goes in the custom instructions field. It tells the AI exactly what this project is for and how to operate within it.

Layer 2: ICP (Ideal Customer Profile)

Who you're writing for. This goes in your project knowledge.

Include:

Demographics and psychographics

Pain points and desires

Language they use

Transformation they seek

Layer 3: Context Assets

These are your uploaded context files that also go in project knowledge.

They can include:

Voice DNA

Best performing examples

Brand guidelines

Product/service information

Frameworks and methodologies

Layer 4: Trigger Prompts

With all that context loaded, your prompts become simple:

"Write a newsletter about [topic]"

"Create 5 LinkedIn posts from this article"

"Draft an email sequence for [campaign]"

The heavy lifting is done by the context layers, not the prompt.

The same context system works in both ChatGPT and Claude. Build it once, deploy everywhere.
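To make "build it once" concrete, here is a minimal sketch of the four layers kept as one reusable bundle. It assumes you store the layers as plain text on your own machine and paste or upload them into each tool by hand. `ProjectContext`, `knowledge_files`, `trigger_prompt`, and the file names are hypothetical names for illustration, not part of any ChatGPT or Claude API.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectContext:
    """The first three layers, stored once and reused across tools (hypothetical)."""
    instructions: str                      # Layer 1: project instructions
    icp: dict[str, str]                    # Layer 2: ideal customer profile
    context_assets: dict[str, str] = field(default_factory=dict)  # Layer 3: title -> text

    def instructions_field(self) -> str:
        # Text to paste into the project's instructions field.
        return self.instructions.strip()

    def knowledge_files(self) -> dict[str, str]:
        # Layers 2 and 3 as files to upload to project knowledge.
        icp_text = "\n".join(f"{key}: {value}" for key, value in self.icp.items())
        files = {"icp.md": icp_text}
        for title, text in self.context_assets.items():
            filename = title.lower().replace(" ", "-") + ".md"
            files[filename] = text
        return files


def trigger_prompt(template: str, **values: str) -> str:
    """Layer 4: a short prompt, since the context layers carry the detail."""
    return template.format(**values)


if __name__ == "__main__":
    # All values below are placeholders invented for the example.
    context = ProjectContext(
        instructions="This project is for writing a weekly newsletter.",
        icp={
            "Pain points": "No time to write",
            "Language they use": "Plain and direct",
        },
        context_assets={
            "Voice DNA": "Short sentences. No jargon.",
            "Brand guidelines": "Always sign off with the founder's first name.",
        },
    )
    print(context.instructions_field())
    print(sorted(context.knowledge_files()))
    print(trigger_prompt("Write a newsletter about {topic}", topic="onboarding emails"))
```

The same bundle can then be loaded into a ChatGPT project and a Claude project without rewriting anything.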

ChatGPT for professional work?

This is the feature ChatGPT needed to be actually useful for professional work. No more tab management. No more context repetition. No more lost conversations.

It's not as sophisticated as Claude's implementation — the memory system is more limited. But it's still a big deal for ChatGPT users.

The tools keep converging on what works. Persistent context is no longer optional.

Time to build your projects and put them to work.

— Alex

Founder: AI WriterOps | AI Disruptor

Helpful summary, Alex.

Can you explain why/when a business would use a Custom GPT vs. a "Project" approach to business problem solving? And do you ever use the custom GPT method in your work?

It would help to understand this distinction from the context of what you've summarized with ChatGPT's persistent context updates.

Thank you,

—Mike

I use Custom GPTs as my observer node, because they can be programmed to act analytically and they are disconnected from your main memory. This allows a more objective review of the work you complete in your main stack.